SQL in R - Football Data

Operating a simple SQL database in R to store and access Understat shot data

It’s been a while since I put anything together, however opening up Substack again reminds me I have a host of half written pieces in my drafts…so time to complete them!

Anyway, the twitter football analytics community has progressed considerably with some impressive hires. Where data was once limited, there are now many resources for both R and Python to develop football analytics in the public domain acting as a visible, online CV. Consequently, clubs and data companies are picking up those analysts which are showcasing their skills - no better example of this than in Mark Thompson’s tweet.

With the hires come a range of job specs detailing a club expectations, with requirements such as this common place. There is much focus on developing coding skills and transferring this to interpretable visualisations, however, database management is becoming more prominent, primarily, SQL.

Prior to jumping into the R black hole, I had no coding experience. This applies to SQL. Luckily, R has a workflow to interact with SQL databases without leaving Rstudio.

As always, this is something that *seems* to work but fully acknowledge it could be error strewn! None the less, the rough plan:

Download Understat x/y shot data

Create an SQL database

Copy Understat data to the database

Access and query the database

Database, database, database.

Rstudio is required to complete the following, an outline of which here.

As always, start by loading the required packages:

library(tidyverse)

library(glue)

library(DBI) ##install.packages("DBI")

library(RSQLite) ##install.packages("RSQLite")

library(here) ##install.packages("here")A quick package breakdown:

DBI - connects R to a database

RSQLite - enables SQL to run in R along with DBI

here - reliable path to save output

Get the data

The data used to populate the created database will be the x/y shot data in these 35 .csv files for the top 5 European Leagues 2014-2020 from Understat.

##league names ----

leagues <- c("bundesliga",

"epl",

"la_liga",

"ligue_1",

"serie_a")

##years ----

year <- 2014:2020Now using ‘crossing’ the variations can be created:

##create league and year combinations ----

data <- crossing(leagues, year) %>%

mutate(join = glue::glue("{leagues}_{year}"))

dataThere should be a tibble output of the 35 league and year combinations:

The file path is consistent aside from the league and year (copy and pasting the example below urls will output the raw files):

https://raw.githubusercontent.com/Markjwilkins/Understat/main/Shot%20Data%20by%20Year/epl_2014.csv

https://raw.githubusercontent.com/Markjwilkins/Understat/main/Shot%20Data%20by%20Year/epl_2015.csv

The intention is to iterate over each variable retrieving the file. A quick function:

##create function to pull all understat .csv files ----

csv_files <- function(file_name) {

readr::read_csv(url(

glue::glue('https://raw.githubusercontent.com/Markjwilkins/Understat/main/Shot%20Data%20by%20Year/{file_name}.csv'

)

))

}Now the fun stuff, grabbing the data:

##run function for each .csv creating a list ----

df <- data$join %>%

set_names(.) %>%

map(csv_files)A few lines, but a fair amount going on:

Using data$join will name the output lists by the league name and year (set_names(.)) before applying the csv_files function using purrr::map to iterate over each file.

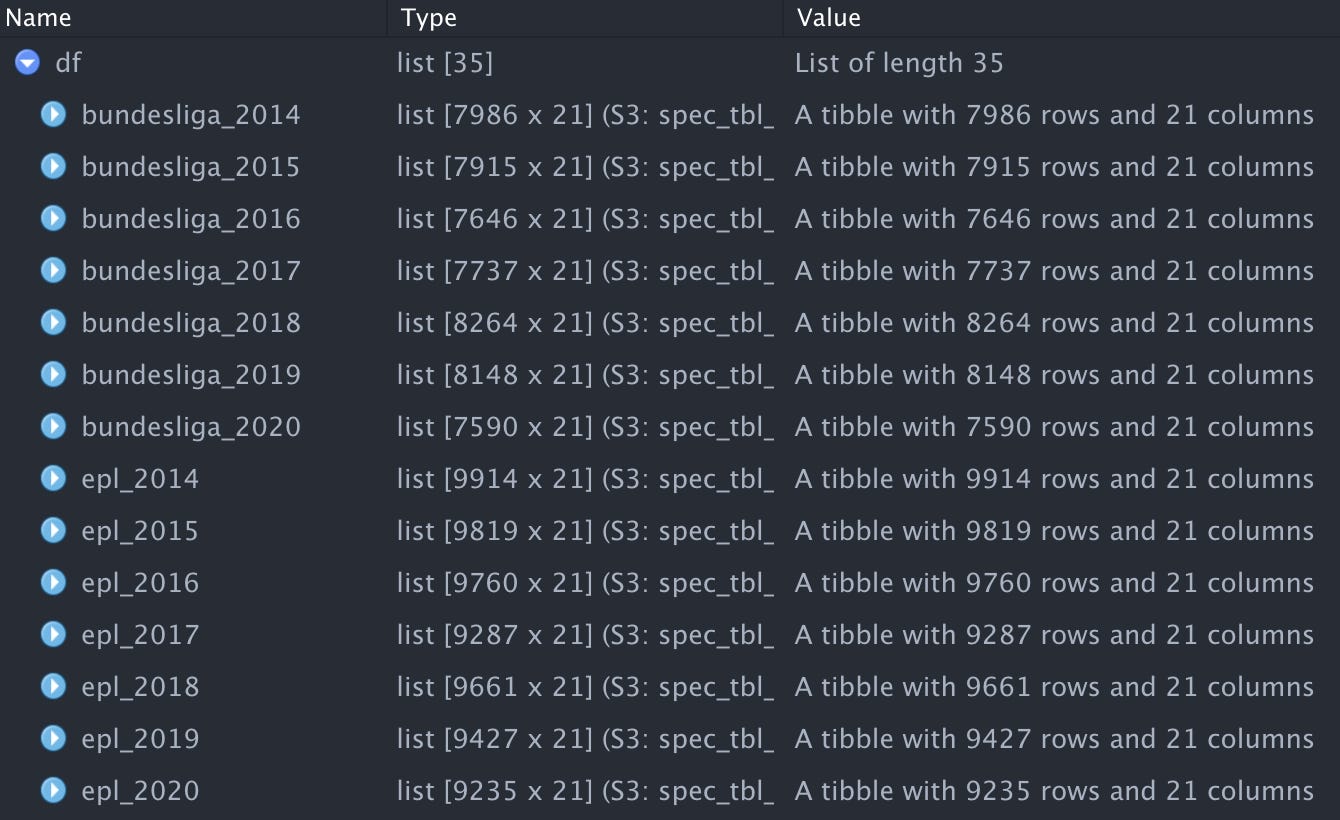

A sample of the output should be similar to the following:

Within the list theres nested tibbles holding the shot data for the given league and year. By typing df[["bundesliga_2014"]] you can access the specific dataframe.

We now have ~317,000 shots! Yay!

Create the database

As we have the data, the database can be created. The next part isn’t essential but checking where the database will be saved is probably handy - using the here package makes the process straight forward. To check the directory simply use here::here().

Create the database:

##create .db ----

con <- dbConnect(drv = RSQLite::SQLite(),

here::here("understat_shot.db"))

conDone!

Add data to the database

Finally, to add the data to the empty database and check the data now exists! Again, using purrr, this time map2() to “iterate over multiple arguments simultaneously”.

##copy understat data to new .db ----

purrr::map2(data$join, df, ~ dbWriteTable(con, .x, .y))

##check data exists in .db ----

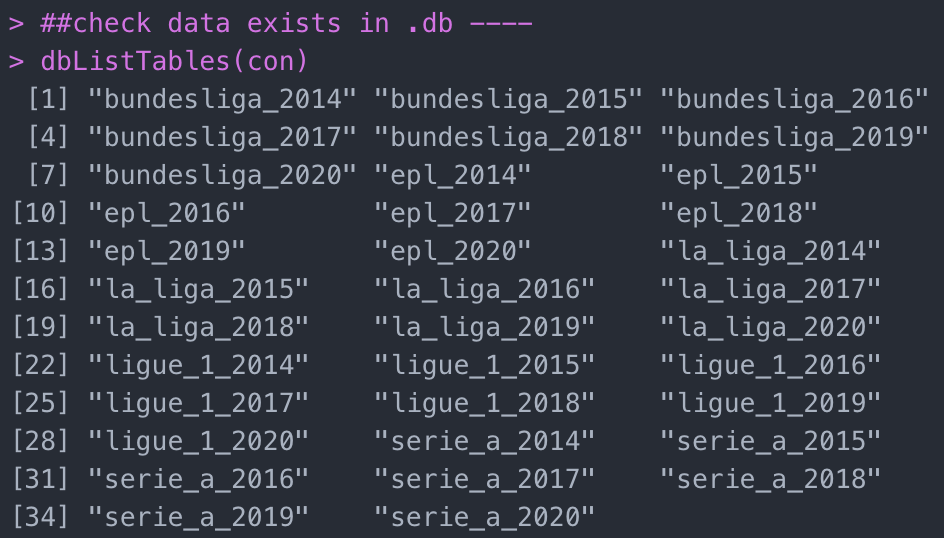

dbListTables(con) At this point there should be 35 tables when accessing dbListTables :

Query the database

Finally, to query the database. To output a tibble of a selected league:

##query .db ----

x <- tbl(con, "epl_2018")

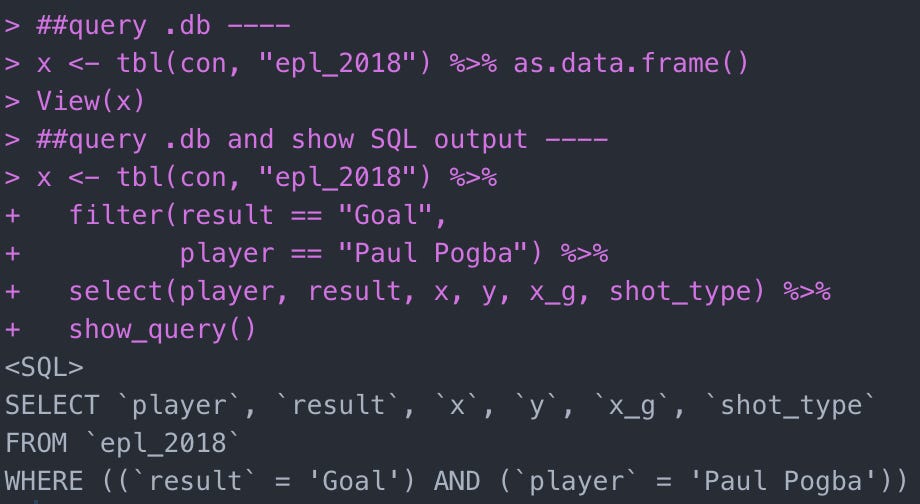

xTo take this a step further, there is the ability to convert R code to SQL. This obviously has a variety of uses from learning SQL to communicating with those working in SQL.

##query .db and show SQL output ----

x <- tbl(con, "epl_2018") %>%

filter(result == "Goal",

player == "Paul Pogba") %>%

select(player, result, x, y, x_g, shot_type) %>%

show_query()The output:

Oooooooo some SQL. By using dbGetQuery() this can applied in the opposing direction from SQL to R.

To combine all tables in the database:

##all data ----

tables <- dbListTables(con)

all_data <- map_df(tables, dbReadTable, conn = con)

all_dataTo this point, the database has been queried providing a snapshot of what is contained. To fully retrieve data from the database into our R session, collect() can be added to the pipe (a simple example):

##retrieve data into R session

final <-

all_data %>%

filter(player == "Memphis Depay") %>%

select(result, player, x_g, x, y, season) %>%

mutate(x = x*100,

y = y*100) %>%

collect()Finally, remember to close the database connection:

##close .db connection

dbDisconnect(con)In the future, to access the SQL database and the data added we can re-open the connection:

##open .db ----

con <- dbConnect(drv = RSQLite::SQLite(),

here::here("understat_shot.db"))Lovely stuff. By accessing the newly created SQL database in the future you have ~317k+ of shot data immediately available to work with as you wish. This can be applied to many scenarios and allow you to integrate SQL into your workflow.

Hopefully this has been of interest and some use! A natural next step would be to automate the updating of the database weekly with most recent fixture data. Maybe that will be the next piece?

As always, let me know if you have any questions!

Full code on github.

The full code:

# Load packages -----------------------------------------------------------

library(tidyverse)

library(glue)

library(progress) ##install.packages("progress")

library(DBI) ##install.packages("DBI")

library(RSQLite) ##install.packages("RSQLite")

library(here) ##install.packages("here")

##league names ----

leagues <- c("bundesliga",

"epl",

"la_liga",

"ligue_1",

"serie_a")

##years ----

year <- 2014:2020

##create league and year combinations ----

data <- crossing(leagues, year) %>%

mutate(join = glue::glue("{leagues}_{year}"))

##create progress bar ----

pb <- progress::progress_bar$new(total = length(data$join),

format = "Downloading :[:bar] :percent eta: :eta")

##create function to pull understat all .csv files ----

csv_files <- function(file_name) {

pb$tick() ##show progress

readr::read_csv(url(

glue::glue('https://raw.githubusercontent.com/Markjwilkins/Understat/main/Shot%20Data%20by%20Year/{file_name}.csv'

)

))

}

##run function for each csv creating a list ----

df <- data$join %>%

set_names(.) %>%

map(csv_files)

# Create SQL db -----------------------------------------------------------

##check where .db will be saved ----

here::here()

##create .db ----

con <- dbConnect(drv = RSQLite::SQLite(),

here::here("understat_shot.db"))

##copy understat data to new .db ----

purrr::map2(data$join, df, ~ dbWriteTable(con, .x, .y))

##check data exists in .db ----

dbListTables(con)

##query .db ----

x <- tbl(con, "epl_2018")

##query .db and show SQL output ----

x <- tbl(con, "epl_2018") %>%

filter(result == "Goal",

player == "Paul Pogba") %>%

select(player, result, x, y, x_g, shot_type) %>%

show_query()

##all data ----

tables <- dbListTables(con)

all_data <- map_df(tables, dbReadTable, conn = con)

all_data

##retrieve data into R session

final <-

all_data %>%

filter(player == "Memphis Depay") %>%

select(result, player, x_g, x, y, season) %>%

mutate(x = x*100,

y = y*100) %>%

collect()

##close .db connection

dbDisconnect(con)